
Select a tool, then ask it to complete an assignment you would normally give your students. What are the results? Ask it to modify the task, and see how it responds. Can you identify possible areas of concern for academic integrity, or opportunities for student learning? How might students use this technology in your course? Can you ask students how they are currently using generative AI tools? What clarity will students need to distinguish between appropriate and inappropriate uses of these tools? Consider how you might adjust assignments to either integrate generative AI into your course or identify areas where students might lean on the technology, and turn those areas into opportunities to encourage deeper and more critical thinking.

Be open to continuing to learn and to having ongoing conversations with colleagues, your department, people in your discipline, and even your students about the impact generative AI is having. Decide whether and when you want students to use the technology in your courses, and clearly communicate your criteria and expectations to them.

Be transparent and direct about your expectations. Most of us want to discourage students from using generative AI to complete assignments at the expense of learning critical skills that will affect their success in their majors and careers. We would also like to take some time to focus on the opportunities that generative AI presents.

We also recommend that you consider the availability of generative AI tools as you explore their potential uses, especially tools that students may be required to interact with. Finally, it is important to think about the ethical implications of using such tools. These topics are fundamental if you are considering using AI tools in your assignment design.

Our goal is to support faculty in enhancing their teaching and learning experiences with the latest AI technologies and tools. We look forward to providing various opportunities for professional development and peer learning.

AI Consulting Services

I am Pinar Seyhan Demirdag, and I'm the founder and AI director of Seyhan Lee. In this LinkedIn Learning course, we will discuss how to use this tool to drive the creation of your intent. Join me as we dive deep into this new creative revolution that I'm so excited about, and let's discover together how each of us can have a place in this age of advanced technologies.

A neural network is a way of processing information that mimics biological neural systems, like the connections in our own brains. It's how AI can make connections among seemingly unrelated sets of information. The concept of a neural network is closely related to deep learning. How does a deep learning model use the neural network concept to connect data points? Start with how the human brain works.

The brain is made up of interconnected nerve cells called neurons. These neurons use electrical impulses and chemical signals to communicate with one another and transmit information between different areas of the brain. An artificial neural network (ANN) is based on this biological phenomenon, but it is formed by artificial neurons made from software modules called nodes. These nodes use mathematical calculations (instead of chemical signals, as in the brain) to communicate and transmit information.
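To make the idea of nodes concrete, here is a minimal sketch of a tiny hand-wired network. It assumes the simplest possible node: a weighted sum of its inputs plus a bias, passed through a threshold ("step") activation. The function names and the XOR task are illustrative choices, and the weights are picked by hand rather than learned, which is what training would normally do.

```python
# A hand-wired artificial neural network: each node computes a weighted sum
# of its inputs plus a bias, then applies an activation function.
from typing import Callable, Sequence


def step(x: float) -> float:
    """Threshold activation: the node 'fires' (1.0) when its input is positive."""
    return 1.0 if x > 0 else 0.0


def node(inputs: Sequence[float], weights: Sequence[float], bias: float,
         activation: Callable[[float], float] = step) -> float:
    """One artificial neuron: weighted sum of inputs plus bias, then activation."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(total)


def xor_network(x1: float, x2: float) -> float:
    """Two hidden nodes feed one output node; weights are hand-picked, not learned."""
    h_or = node([x1, x2], [1.0, 1.0], -0.5)        # fires if at least one input is 1
    h_and = node([x1, x2], [1.0, 1.0], -1.5)       # fires only if both inputs are 1
    return node([h_or, h_and], [1.0, -1.0], -0.5)  # "OR but not AND" gives XOR


if __name__ == "__main__":
    for a in (0.0, 1.0):
        for b in (0.0, 1.0):
            print(f"{a:.0f} XOR {b:.0f} = {xor_network(a, b):.0f}")
```

No single node can compute XOR on its own; combining two hidden nodes is what lets the network relate its inputs in a way none of them captures individually.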

What Is AI's Role in Creating Digital Twins?

A large language model (LLM) is a deep learning model trained by applying transformers to a massive set of generalized data. LLMs power many of the popular AI chat and text tools. Another deep learning technique, the diffusion model, has proven to be a good fit for image generation. Diffusion models are trained on a process that gradually turns a natural image into blurry visual noise, and they learn to reverse it: to generate an image, they start from noise and remove it step by step.
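The noising half of a diffusion model can be written in closed form. The sketch below assumes a DDPM-style setup with a linear noise schedule (beta from 1e-4 to 0.02 over T = 1000 steps); those numbers, the function names, and the four-"pixel" image are illustrative assumptions. It shows only the forward direction, where the fraction of surviving signal, `alpha_bar`, shrinks toward zero. The neural network that learns to undo this is not shown.

```python
# Forward (noising) process of a DDPM-style diffusion model, in pure Python.
# Assumption: a linear beta schedule from 1e-4 to 0.02 over T = 1000 steps.
import math
import random
from typing import List


def linear_betas(T: int = 1000, start: float = 1e-4, end: float = 0.02) -> List[float]:
    """Per-step noise variances, increasing linearly."""
    return [start + (end - start) * t / (T - 1) for t in range(T)]


def alpha_bars(betas: List[float]) -> List[float]:
    """Cumulative product of (1 - beta): how much of the original signal survives."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def noise_image(x0: List[float], t: int, abar: List[float],
                rng: random.Random) -> List[float]:
    """Jump straight to step t: x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    keep, mix = math.sqrt(abar[t]), math.sqrt(1.0 - abar[t])
    return [keep * px + mix * rng.gauss(0.0, 1.0) for px in x0]


if __name__ == "__main__":
    rng = random.Random(0)
    abar = alpha_bars(linear_betas())
    image = [1.0, -1.0, 0.5, 0.0]  # a tiny stand-in for pixel values
    for t in (0, 250, 999):
        noisy = noise_image(image, t, abar, rng)
        print(t, round(abar[t], 5), [round(v, 2) for v in noisy])
```

By the last step almost none of the original image survives, which is why generation can start from pure noise and work backward.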

Deep learning models can be described in terms of parameters. A simple credit prediction model trained on 10 inputs from a loan application would have about 10 parameters, roughly one weight per input. By contrast, an LLM can have billions of parameters. OpenAI's Generative Pre-trained Transformer 4 (GPT-4), one of the foundation models that powers ChatGPT, is reported to have 1 trillion parameters.
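Parameter counts come from simple arithmetic. As a sketch, assume every layer is fully connected (dense): it has one weight per input-output pair, plus an optional bias per output. Treating the credit model as a single dense layer with one output and no bias (an assumption) gives exactly 10; the 64-node hidden layer and the 4096-wide layer below are hypothetical sizes chosen only to show how quickly counts grow, not any real model's architecture.

```python
# Parameter counting for fully connected (dense) layers.
from typing import Sequence


def dense_params(n_in: int, n_out: int, bias: bool = True) -> int:
    """One weight per input-output pair, plus one bias per output if enabled."""
    return n_in * n_out + (n_out if bias else 0)


def mlp_params(layer_sizes: Sequence[int], bias: bool = True) -> int:
    """Total parameters of a stack of dense layers, e.g. [10, 64, 1]."""
    return sum(dense_params(a, b, bias) for a, b in zip(layer_sizes, layer_sizes[1:]))


if __name__ == "__main__":
    print(dense_params(10, 1, bias=False))        # 10 inputs -> 1 score: 10
    print(dense_params(10, 1))                    # same model with a bias: 11
    print(mlp_params([10, 64, 1]))                # one hidden layer of 64 nodes: 769
    print(dense_params(4096, 4096, bias=False))   # one wide layer: 16777216
```

A single 4096-by-4096 layer already holds about 16.8 million weights, so stacking many wide layers is how models reach billions of parameters.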

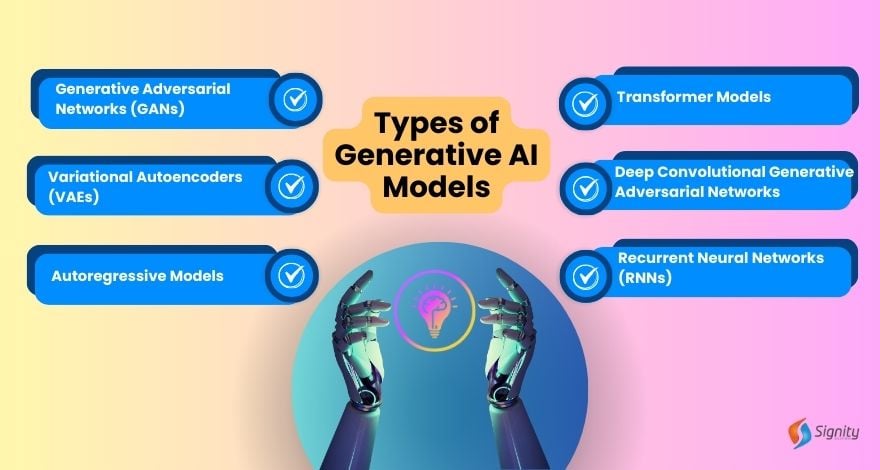

Generative AI refers to a category of AI algorithms that generate new outputs based on the data they have been trained on. It relies on deep learning techniques such as generative adversarial networks (GANs) and has a wide range of applications, including creating images, text and audio. While there are concerns about the impact of AI on the job market, there are also potential benefits, such as freeing up time for people to focus on more creative and value-adding work.

Excitement is building around the possibilities that AI tools unlock, but what exactly these tools are capable of and how they work is still not widely understood. We could write about this in detail, but given how advanced tools like ChatGPT have become, it only seems right to see what generative AI has to say about itself.

Everything that follows in this article was generated using ChatGPT based on specific prompts. Without further ado, here is generative AI as explained by generative AI.

Generative AI technologies have exploded into mainstream consciousness. Image: Visual Capitalist

Generative AI refers to a category of artificial intelligence (AI) algorithms that generate new outputs based on the data they have been trained on.

In simple terms, the AI was given information about what to write about and then generated the article based on that information. In conclusion, generative AI is a powerful tool with the potential to transform many industries. With its ability to create new content based on existing data, generative AI could change the way we create and consume content in the future.

Multimodal AI

The transformer architecture is less well suited to other types of generative AI, such as image and audio generation.

In an autoencoder, the encoder compresses input data into a lower-dimensional space, called the latent (or embedding) space, that preserves the most essential aspects of the data. A decoder can then use this compressed representation to reconstruct the original data. Once an autoencoder has been trained in this way, it can take novel inputs and generate what it considers the appropriate outputs.
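The encode-compress-decode cycle can be shown with a deliberately tiny model. This is a minimal sketch under several assumptions: the autoencoder is linear (no activation functions), the toy data are 2-D points that all lie on the line y = 2x, and the latent space is a single number. Plain gradient descent on the reconstruction error teaches the encoder and decoder to agree, after which the decoder can turn a latent value it never saw into a plausible new point.

```python
# A minimal linear autoencoder trained by gradient descent (pure Python).
from typing import List, Sequence, Tuple

# Toy training data: every point lies on the line y = 2x.
DATA: List[Tuple[float, float]] = [(t, 2.0 * t) for t in (-1.0, -0.5, 0.0, 0.5, 1.0)]


def encode(x: Sequence[float], e: Sequence[float]) -> float:
    """Encoder: compress a 2-D point into one latent number."""
    return e[0] * x[0] + e[1] * x[1]


def decode(z: float, d: Sequence[float]) -> Tuple[float, float]:
    """Decoder: expand a latent number back into a 2-D point."""
    return (d[0] * z, d[1] * z)


def reconstruction_loss(e: Sequence[float], d: Sequence[float]) -> float:
    """Mean squared distance between each point and its reconstruction."""
    total = 0.0
    for x in DATA:
        xh = decode(encode(x, e), d)
        total += (xh[0] - x[0]) ** 2 + (xh[1] - x[1]) ** 2
    return total / len(DATA)


def train(steps: int = 2000, lr: float = 0.05) -> Tuple[List[float], List[float]]:
    """Plain gradient descent on the reconstruction loss."""
    e, d = [0.5, 0.5], [0.5, 0.5]
    n = len(DATA)
    for _ in range(steps):
        ge, gd = [0.0, 0.0], [0.0, 0.0]
        for x in DATA:
            z = encode(x, e)
            r = (d[0] * z - x[0], d[1] * z - x[1])  # reconstruction error
            r_dot_d = r[0] * d[0] + r[1] * d[1]
            for i in range(2):
                gd[i] += 2.0 * r[i] * z / n         # dLoss/dd_i
                ge[i] += 2.0 * r_dot_d * x[i] / n   # dLoss/de_i
        for i in range(2):
            e[i] -= lr * ge[i]
            d[i] -= lr * gd[i]
    return e, d


if __name__ == "__main__":
    e, d = train()
    print("loss after training:", reconstruction_loss(e, d))
    print("reconstruct unseen (3, 6):", decode(encode((3.0, 6.0), e), d))
    print("decode a new latent value 0.7:", decode(0.7, d))
```

Models used for generation in practice, such as variational autoencoders, add nonlinear layers and a probabilistic latent space, but the encode, compress, and decode structure is the same.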

In a generative adversarial network (GAN), two models are trained against each other: a generator and a discriminator. The generator strives to create realistic data, while the discriminator aims to distinguish between those generated outputs and real "ground truth" outputs. Every time the discriminator catches a generated output, the generator uses that feedback to try to improve the quality of its outputs.
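The generator-versus-discriminator loop can be sketched in one dimension. Everything here is an illustrative assumption: the "real" data are three fixed numbers near 3, the generator simply shifts fixed noise by a learnable offset `theta`, and the discriminator is logistic regression. Each round, the discriminator is trained to tell real from generated samples, and then the generator takes one gradient step on the non-saturating loss `-log D(G(z))`, i.e. it uses the discriminator's judgment as feedback. Real GANs use deep networks for both players, sample fresh noise at every step, and can be unstable to train.

```python
# A toy 1-D GAN in pure Python (illustrative, not a full training recipe).
import math
from typing import List, Tuple

REAL: List[float] = [2.5, 3.0, 3.5]    # "ground truth" samples
NOISE: List[float] = [-0.5, 0.0, 0.5]  # fixed generator inputs


def sigmoid(s: float) -> float:
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def generate(theta: float) -> List[float]:
    """Generator: shift each noise value by the learnable offset theta."""
    return [z + theta for z in NOISE]


def train_discriminator(w: float, b: float, fakes: List[float],
                        steps: int = 100, lr: float = 0.1) -> Tuple[float, float]:
    """Gradient descent on binary cross-entropy: real -> 1, generated -> 0."""
    batch = [(x, 1.0) for x in REAL] + [(x, 0.0) for x in fakes]
    for _ in range(steps):
        gw = sum((sigmoid(w * x + b) - y) * x for x, y in batch) / len(batch)
        gb = sum(sigmoid(w * x + b) - y for x, y in batch) / len(batch)
        w, b = w - lr * gw, b - lr * gb
    return w, b


def generator_step(theta: float, w: float, b: float, lr: float = 0.1) -> float:
    """Use the discriminator's feedback: minimize -log D(G(z)) w.r.t. theta."""
    fakes = generate(theta)
    grad = -sum((1.0 - sigmoid(w * x + b)) * w for x in fakes) / len(fakes)
    return theta - lr * grad


def train_gan(rounds: int) -> Tuple[float, float, float]:
    """Alternate: train the discriminator, then nudge the generator."""
    theta, w, b = 0.0, 0.0, 0.0
    for _ in range(rounds):
        w, b = train_discriminator(w, b, generate(theta))
        theta = generator_step(theta, w, b)
    return theta, w, b


if __name__ == "__main__":
    for rounds in (1, 5, 20):
        theta, _, _ = train_gan(rounds)
        print(f"after {rounds:2d} rounds: generator offset theta = {theta:.3f}")
```

Because the discriminator learns that larger values look "real", its feedback pushes `theta` upward, moving the generated samples toward the real ones.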

In the case of language models, the input consists of strings of words that make up sentences, and the transformer predicts which words will come next (we'll get into the details below). In addition, transformers can process all the elements of a sequence in parallel rather than marching through it from beginning to end, as earlier types of models such as recurrent neural networks did; this parallelization makes training faster and more efficient.
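The mechanism that lets a transformer look at a whole sequence at once is attention. Below is a minimal sketch of scaled dot-product self-attention in pure Python. As a simplifying assumption, the token vectors themselves serve as queries, keys, and values; real transformers first multiply them by learned projection matrices, use many attention heads, and, for next-word prediction, add a causal mask so a position cannot look at later words.

```python
# Scaled dot-product self-attention over a whole sequence (pure Python).
import math
from typing import List

Matrix = List[List[float]]


def softmax(xs: List[float]) -> List[float]:
    """Turn scores into positive weights that sum to 1."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: Matrix) -> Matrix:
    """Each output row mixes every token, weighted by similarity to the query."""
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    out: Matrix = []
    # No row depends on another row's output, so all positions could be
    # computed in parallel (e.g. as one matrix multiplication on a GPU).
    for q in tokens:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in tokens]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(dim)])
    return out


if __name__ == "__main__":
    sentence = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.9, 0.1, 0.0]]  # toy vectors
    for row in self_attention(sentence):
        print([round(v, 3) for v in row])
```

The loop is written sequentially for readability, but each iteration is independent, which is exactly the property that lets transformers avoid the step-by-step processing of recurrent models.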

Each word is represented as a vector, a list of numbers. The numbers in the vector represent different aspects of the word: its semantic meanings, its relationship to other words, its frequency of use, and so on. Similar words, like elegant and fancy, will have similar vectors and will also be near each other in the vector space. These vectors are called word embeddings.
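"Near each other in the vector space" is often measured with cosine similarity, which compares the directions of two vectors. In the sketch below, the four-dimensional vectors are invented for illustration and are not taken from any real model; learned embeddings typically have hundreds of dimensions, and Euclidean distance is another common measure.

```python
# Comparing word embeddings with cosine similarity.
import math
from typing import Dict, List

# Hypothetical toy embeddings, hand-picked for illustration only.
EMBEDDINGS: Dict[str, List[float]] = {
    "elegant": [0.81, 0.62, 0.05, 0.10],
    "fancy":   [0.78, 0.58, 0.12, 0.07],
    "banana":  [0.03, 0.10, 0.91, 0.44],
}


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """1.0 means same direction; values near 0 mean unrelated directions."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    e = EMBEDDINGS
    print("elegant vs fancy: ", round(cosine_similarity(e["elegant"], e["fancy"]), 3))
    print("elegant vs banana:", round(cosine_similarity(e["elegant"], e["banana"]), 3))
```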

When the model is generating text in response to a prompt, it uses its predictive powers to decide what the next word should be. When generating longer pieces of text, it predicts the next word in the context of all the words it has written so far; this feature increases the coherence and continuity of its writing.
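The predict-append-repeat loop can be sketched in a few lines. As an assumption for illustration, a hypothetical lookup table (`TOY_MODEL`) stands in for the language model and only looks at the last two words, whereas a real LLM conditions on the entire context and chooses from a probability distribution over its vocabulary. The surrounding loop, which feeds each new word back in as context, is the part this sketch is meant to show.

```python
# Autoregressive generation: predict a word, append it, repeat.
from typing import Dict, List, Optional, Tuple

# Hypothetical stand-in for a language model (not a real model or API).
TOY_MODEL: Dict[Tuple[str, str], str] = {
    ("the", "cat"): "sat",
    ("cat", "sat"): "on",
    ("sat", "on"): "the",
    ("on", "the"): "mat",
}


def predict_next(context: List[str]) -> Optional[str]:
    """Look up the next word for the current context (None if unknown)."""
    if len(context) < 2:
        return None
    return TOY_MODEL.get((context[-2], context[-1]))


def generate(prompt: str, max_new_words: int = 10) -> str:
    words = prompt.split()
    for _ in range(max_new_words):
        nxt = predict_next(words)  # the predictor receives everything so far
        if nxt is None:            # no known continuation: stop
            break
        words.append(nxt)          # feed the new word back in as context
    return " ".join(words)


if __name__ == "__main__":
    print(generate("the cat"))
```

Run on the prompt "the cat", the loop produces "the cat sat on the mat" and then stops, because the toy table has no entry for the pair ("the", "mat").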
